Bill Chang, chief executive officer of Digital InfraCo, describes the initiative as part of what he calls an “AI grid” spanning data centres, networks and edge infrastructure — a system he likens to a power network.

In his analogy, large data centres act as generators supplying compute. Fibre and 5G networks function as transmission lines. Edge locations serve as substations. Last-mile fixed and mobile networks deliver AI workloads into enterprises.

The new CoE operates as a scaled-down version, or a “micro AI grid”, where organisations validate models, workflows and data pipelines before shifting them onto the main production infrastructure.

“[After] you test your ideas and solve the bottlenecks at [the micro AI grid or new CoE], you can seamlessly flip over to the [full] AI grid and get the resources you need when you go for full-scale deployment,” Chang says in a media briefing.

He declines to provide a precise figure but says the CoE represents a multi-million-dollar investment covering GPUs, facilities and headcount.

The facility, he adds, feeds into the company’s broader AI infrastructure business. “Our strategy goes beyond building state-of-the-art AI infrastructure. We have seen many organisations struggle to move from the excitement of AI to meaningful impact. [As such,] we are bringing the ecosystem together, one that transforms ambitions into real-world outcomes, and potential into lasting economic and societal impact – further contributing to advancing Singapore’s National AI imperative,” says Chang.

Tackling the pilot problem

According to Chang, infrastructure and operational complexity remain the main barriers to scaling enterprise AI.

Access to high-performance GPUs is limited, power density requirements are rising sharply, and many enterprises lack the internal capability to redesign workflows around AI rather than simply layering tools onto existing systems.

To address those constraints, the CoE will focus on four areas.

The first is preparing Digital InfraCo’s Nxera data centres for the next generation of Nvidia GPUs. The unit currently operates Nvidia’s GB200 systems at around 200 kilowatts (kW) per rack, roughly 20 to 25 times the density of conventional data centres, which typically run at 6kW to 10kW per rack, says Chang.

The next wave of Nvidia systems, codenamed Rubin Ultra and Feynman and expected between 2027 and 2029, could require between 600kW and 1 megawatt (MW) per rack. Such GPUs are critical for running the complex large language models behind mission-critical AI workloads.
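The rack figures quoted above can be checked with simple arithmetic. The short Python sketch below is illustrative only: the constants are the numbers cited in this article, and the function names `ratio_range` and `step_up_range` are hypothetical helpers, not anything used by Digital InfraCo or Nvidia.

```python
# Rack power figures as quoted in the article (kW per rack).
CONVENTIONAL_KW = (6.0, 10.0)   # typical conventional data centre rack
GB200_KW = 200.0                # current GB200 racks, per Chang
NEXT_GEN_KW = (600.0, 1000.0)   # projected range; 1 MW = 1,000 kW


def ratio_range(rack_kw, baseline_range):
    """Return (min, max) multiples of rack_kw over a baseline range.

    The smallest multiple comes from the largest baseline and vice versa.
    """
    low_base, high_base = baseline_range
    return rack_kw / high_base, rack_kw / low_base


def step_up_range(future_range, current_kw):
    """Return (min, max) multiples of a future range over a current figure."""
    low_future, high_future = future_range
    return low_future / current_kw, high_future / current_kw


if __name__ == "__main__":
    lo, hi = ratio_range(GB200_KW, CONVENTIONAL_KW)
    print(f"GB200 vs conventional: {lo:.1f}x to {hi:.1f}x")
    lo, hi = step_up_range(NEXT_GEN_KW, GB200_KW)
    print(f"Next generation vs GB200: {lo:.1f}x to {hi:.1f}x")
```

On these inputs, a 200kW rack works out to about 20 to 33 times a conventional rack, so the multiple of 20 to 25 cited sits at the lower end of that range. The projected systems would draw a further three to five times the power of today's GB200 racks.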

To manage that jump in power density, Nxera has built what Chang describes as a stack combining thermodynamic engineering, Internet of Things-based monitoring of critical components and digital twin software for predictive management. The stack is meant to deliver reliability as well as energy and water efficiencies. The company is also preparing for 800-volt direct current infrastructure to support higher-density deployments.

The second area is ecosystem integration. The CoE will bring together frontier model developers, vertical industry application providers, system integrators and digital service firms. This allows enterprises to test full-stack deployments rather than isolated proofs of concept.

The third focus is edge AI. As use cases shift from generative tools to robotics and autonomous systems, compute intensity increases significantly. A single robotics application may require multiple GPU engines running simultaneously — such as one for language processing, one for computer vision and another for spatial recognition. This places greater demands on low-latency networks.

“When you bring AI and networks together, you engineer it such that you can deliver the payload and the service level assurance. To do this at scale, networks become critical,” says Chang.

He adds that the Punggol Digital District, which is earmarked for autonomous systems and advanced technology testing, provides a suitable environment for developing such edge AI capabilities.

The fourth area is applied AI talent development. This aims to ensure that capabilities built within the CoE can transition directly to production infrastructure and support enterprise customers’ long-term adoption plans.

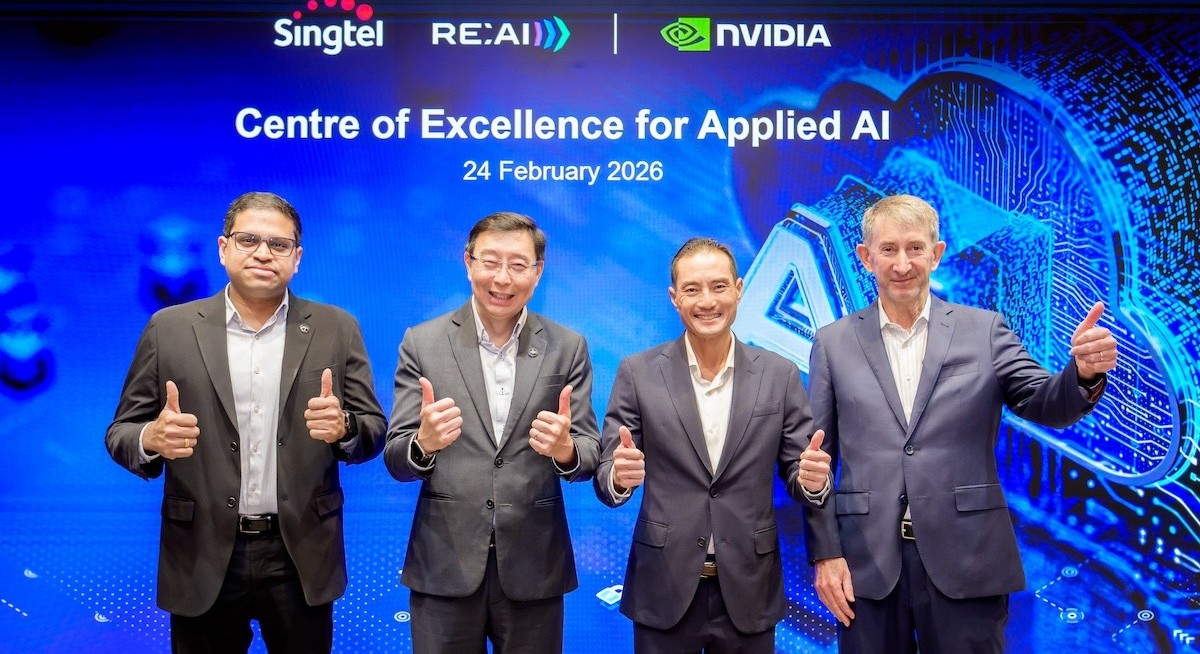

Senior Minister of State for Digital Development and Information Tan Kiat How, who was the guest of honour at the launch, underscores the workforce dimension. “The development and deployment of such AI-powered digital solutions in the real world cannot be done by AI alone. We need model makers, engineers, developers, data scientists and many more roles,” he says.

Ronnie Vasishta, Nvidia’s senior vice president of Telecommunications, comments: “By combining Nvidia AI infrastructure with Singtel’s sovereign AI cloud, we are enabling a secure and collaborative space where organisations and government agencies can progress from trials to scaled deployments with confidence. This initiative will empower businesses and public agencies to unlock new opportunities with AI, strengthen local talent, and drive impactful innovation.”

Commercial impact and scale

The CoE will initially target around 50 to 60 large enterprises and public sector agencies, including banks, healthcare providers, transport operators and research institutions. Many operate under strict data sovereignty requirements and cannot route sensitive workloads outside Singapore.

Chang expects the CoE to drive adoption of both Digital InfraCo’s GPU-as-a-service (GPUaaS) and AI-as-a-service (AIaaS) offerings. “[Large] companies have the means to consume GPUaaS. But smaller companies do not have all the tech resources to do that. They just want outcomes given by AI [and we can deliver that through our AIaaS].”

While this CoE is built around Nvidia’s stack, Chang says the broader AI grid is designed to remain flexible. “We’re talking to different players like Grok and other GPU providers, but those are for outside of this CoE; they are for the stuff we do on our AI grid.”

The broader AI grid strategy rests not only on chip optionality but on scale.

Nxera’s operational and pipeline capacity is expected to more than double from 200MW in 2026 to over 400MW in the medium term. About 40% to 50% is configured for high-density AI workloads, a share that the company is increasing toward 50% to 60% as demand rises. It also has access to an additional 250MW in Johor and Batam, linked via submarine cable, forming what Chang describes as the generation backbone of the broader AI grid.

The CoE complements Digital InfraCo’s RE:AI sovereign cloud platform, which underpins its GPUaaS and AIaaS offerings. Chang says demand for AI infrastructure remains strong, with much of its existing GPU capacity already committed to production customers.

Further expansion, he adds, will depend on customer uptake and access to sufficient power.