Only six in 100 companies that have deployed artificial intelligence (AI) have generated economic returns above 10%, says Vinayak HV, senior partner and leader of technology and AI practice for Asia Pacific at McKinsey & Company.

“[Success is not based solely on the] models which are learning,” he says, pushing back on the assumption that smarter AI alone drives better business outcomes. “It’s actually [based on the] organisation’s compounding capabilities.”

Contrary to two years of industry consensus that treated the AI model as the primary lever of competitive advantage, McKinsey’s research points to a set of conditions that sit beneath the model. Data must be organised in a way that systems can use. Infrastructure needs to support longer, multi-step tasks. Organisations require internal capabilities to build and scale AI beyond pilot projects, rather than treating it as an isolated tool.

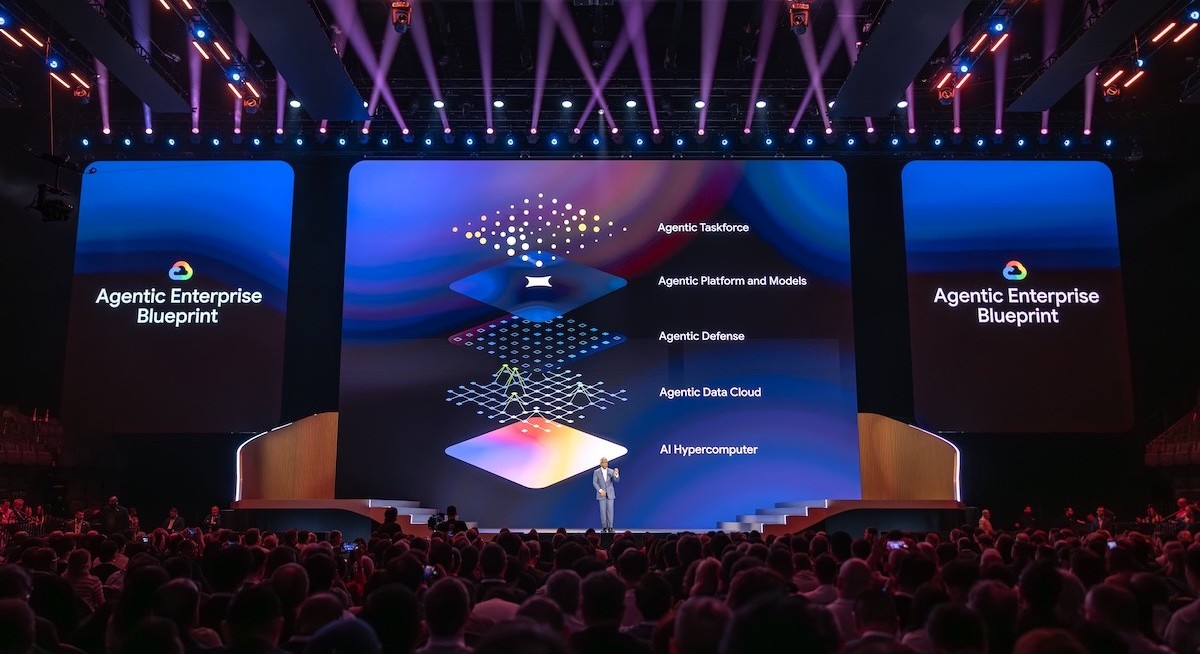

These themes surfaced in discussions at the recent Google Cloud Next event, where the company outlined new infrastructure, data and governance tools aimed at supporting enterprise deployment of AI agents. The announcements focused on helping companies move from experimentation to production, a gap that many organisations have yet to close.

Getting the foundation right

Bunnings, Australia’s largest home improvement retailer, illustrates what that transition looks like in practice. Its AI shopping assistant, Buddy, was introduced after years of investment in data and systems rather than at the start of its AI journey.

Vivek Pradhan, Bunnings’ general manager of data and AI, says the company spent about six years modernising its data foundations across a network of 500 stores and 55,000 employees. During the pandemic, it moved away from legacy on-premise systems and rebuilt its data platform in the cloud. It later established machine learning capabilities and a dedicated generative AI team before launching its customer-facing AI agent.

“What we were really looking to do is just work back from the customer problem of helping them complete their projects on time. A lot of customers who come into our stores are looking for practical DIY advice and product recommendations, and we were looking to scale that expertise through our online channel,” he shares.

What has let Bunnings build new applications quickly, according to Moe Abdula, Google Cloud’s vice president of customer engineering for Asia Pacific, was its move from data transformations to data products. A transformation is built for a single purpose and rebuilt for the next. A data product is packaged to be called by any application on demand. “The fact that they’ve been able to expose all of this and put them into API (application programming interface) motions and create data products, as opposed to data transformations, has really sped up the time in which they can create new applications,” he shares.

The early results are commercial. Pradhan says Bunnings is seeing increases in customer satisfaction and average order value, with more customers completing purchases remotely. “Customers have the trust in our agent powered by our DIY content, and they’re able to make purchases with confidence.”

The same approach is being applied internally. Staff use a conversational assistant on handheld devices, designed to reduce time spent on administrative work and keep employees on the shop floor. “What we want to do is increase the time our team spends on the shop floor serving customers and helping customers, rather than having to spend time doing administrative tasks in the back office,” says Pradhan.

Abdula calls Bunnings’ data journey “a blueprint…for how firms should move data scientists from archaic managing clusters and infrastructure to actually innovating the data.”

The need to remember

Getting data in order is only part of the problem. An agent without persistent memory forgets every conversation the moment it ends. For a customer interaction, that means asking the same qualifying questions each time. For a complex internal workflow, it means losing the thread of a multi-step task when a session closes. This is not a model limitation. It is an infrastructure design choice, and it has a business cost most enterprises have not yet calculated.

Google’s answer is a new generation of chips designed specifically around this constraint, split for the first time into separate hardware for training AI models and for running them in production (also known as inference). Known as TPU 8t, the training chip is built for raw processing power at massive scale. Its counterpart, TPU 8i, is optimised for inference (including the continuous running of AI agents in production) and is designed to hold large amounts of information close at hand, so the agent does not have to start from scratch each time it is asked to act.

“As people build AI agents, they’re holding more things in memory. For inference, you need something called cache to hold things for long operations, which calls for a different design than you need for training,” says Thomas Kurian, Google Cloud’s CEO. Put simply, an AI agent that has to repeatedly fetch information from external storage is slower and more expensive to run than one that keeps it close at hand.

Google Cloud also launched Memory Bank and Memory Profiles, software features that give AI agents persistent memory across sessions. In practical terms, an AI agent serving a wealth management client can recall that client’s portfolio, risk appetite and prior conversations weeks later without being told again. The underlying model is the same. The memory architecture is what makes it useful.

Citadel Securities, the capital markets firm, shows where this has direct financial logic. The company built a cloud-based quantitative research environment using Google Cloud’s infrastructure to run modelling workloads faster and at lower cost. In that business, speed determines how many hypotheses a team can test before competitors do. Every minute spent re-establishing context at the start of a session is a minute not spent finding an edge.

The lock-in question

Even if a company has its data in order and its agents running with persistent memory, a third problem remains: whether those agents can actually reach the systems where work gets done.

Most large organisations do not run on a single cloud or a single software platform. Data sits across multiple cloud platforms and on-premise environments built up over decades. Day-to-day operations run through dozens of business applications like Salesforce and SAP. An AI agent that can only operate within its vendor’s own environment is, in practice, an agent that cannot do much of the job. And a vendor that makes integration difficult is one that has effectively made itself hard to leave.

Karan Bajwa, Google Cloud’s president for Asia Pacific, acknowledges the tension directly. Rather than forcing scarce technical talent to be “plumbers trying to pull things together”, he says, the intent is to “offer optionality at every part of the stack”, with Google Cloud’s own chips and models available alongside third-party hardware and open-source alternatives, and an agent platform built to work inside the software companies already run.

This means more than 100 pre-built connectors to common enterprise systems, and support for the Model Context Protocol, an open industry standard that lets agents interact with other systems through a common interface. “If you have a bespoke system, you can bring that into the connector,” says Kurian. Google Cloud also announced a cross-cloud data architecture that lets agents query information sitting in other cloud platforms like Azure or Amazon Web Services (AWS) without having to move it. This is useful for organisations that have spent years accumulating data across multiple environments and have no appetite to consolidate it.

A separate but related concern is what happens as AI agent deployments grow. Running a handful of AI agents is manageable. However, running hundreds across an organisation raises oversight questions that most compliance and legal teams have yet to confront, especially when AI agents can access sensitive data, execute transactions or interact with customers.

An AI agent that acts outside its intended boundaries — whether by accessing the wrong data or approving something it should not — creates liability that is difficult to unwind. Google Cloud’s response is to assign each agent a unique identifier tied to a defined set of permissions, with every interaction logged centrally. Singapore’s DBS Bank is among the financial services institutions deploying the stack alongside Citadel Securities and Citi Wealth, where that kind of audit trail has regulatory as well as operational value.
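The pattern described here, a unique identity per agent, a fixed permission set and a central log, can be sketched in a few lines of Python. This is a generic illustration under stated assumptions, not Google Cloud's implementation: `AgentIdentity`, `Gateway` and the permission strings are hypothetical names chosen for the example.

```python
import uuid
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical agent record: a unique ID bound to fixed permissions."""
    name: str
    permissions: frozenset
    agent_id: str = field(default_factory=lambda: str(uuid.uuid4()))


class Gateway:
    """Hypothetical checkpoint that every agent action passes through."""

    def __init__(self):
        self.audit_log = []

    def request(self, agent: AgentIdentity, action: str) -> bool:
        allowed = action in agent.permissions
        # Log every attempt, including denied ones, so there is a full trail.
        self.audit_log.append(
            {"agent_id": agent.agent_id, "action": action, "allowed": allowed}
        )
        return allowed


gateway = Gateway()
assistant = AgentIdentity("store-assistant", frozenset({"read:catalogue"}))

in_scope = gateway.request(assistant, "read:catalogue")
out_of_scope = gateway.request(assistant, "approve:refund")

for entry in gateway.audit_log:
    print(entry)
```

Because the denied request is recorded alongside the permitted one, a compliance team can see not only what an agent did but also what it attempted, which is where the regulatory value of an audit trail comes from.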

From foundation to results

McKinsey’s research suggests the companies that generate returns are not defined by the technology they choose, but by what they build around it.

HV identifies four capabilities that separate organisations succeeding with AI from the rest. The first is anchoring on business value rather than technology for its own sake, with leadership setting the terms. The second is building AI talent internally as organisations “can’t outsource their greatness”, he says. The third is redesigning how the organisation operates to match the pace at which AI moves. The fourth is building feedback loops that let performance compound through use.

The aspiration, HV adds, is for every employee to operate with capabilities that would otherwise be out of reach. “It’s like equipping every employee in our organisation with, let’s say, a model which just won the marathon gold medal. What would that impact?”

Bunnings’ experience reflects that approach. Its agent was the result of decisions made years earlier about how to structure data, build teams and integrate technology into day-to-day operations, rather than a single deployment.