Let me cut through the jargon so you can understand what’s going on. RAM, or more precisely DRAM, is volatile memory that loses its data when power is cut off. DRAM is your device’s working memory: these are the chips that hold whatever your PC, tablet or smartphone is actively using right now, like open apps and browser tabs. NAND, or non-volatile flash memory, retains data without power and is used mostly in solid-state drives (SSDs) and USB drives. Its spot prices are up more than 600% over the past year, and even contract prices have more than doubled.

An SSD is a storage device used in PCs, laptops, tablets and other gadgets to permanently store data such as files, photos, software, operating systems and apps. SSDs have largely replaced hard disk drives, which were once common in PCs and laptops. Your smartphone, gaming console and tablet use flash-based storage as well. As cars move from internal combustion engines to electric and semi-autonomous vehicles, DRAMs and flash memory are becoming key components. USB is a standardised technology for connecting, communicating with, and powering PCs, smartphones and external drives.

Memory chips were once dubbed “commodity chips” and were considered inferior to higher-end customised chips made by chip design giants such as Nvidia, Advanced Micro Devices (AMD), Qualcomm and Broadcom. Memory chip firms went from feast to famine as capacity was added and supply exceeded demand. Eventually, they learnt discipline and delayed adding capacity until it was really needed, which in turn helped prices. Now the AI boom has turned the memory chip business model upside down. Shortages are so acute that big memory chip firms are racing to add capacity, but demand is growing so fast that even if all the plants currently under construction get built in time, there will still be shortages.

Price spikes

So, what does all this mean? If you have been waiting to replace your PC or buy a new smartphone or tablet, you might want to go shopping for one now before prices go up even more over the next few months. Last year, memory chip prices accounted for 5% to 7% of the total cost of PCs or laptops. Right now, they account for about 10%. Analysts expect that memory costs could soar to as much as 15% of total PC costs by the end of this year. If you are in the market for a laptop that costs US$1,200 ($1,519) today, you might have to pay up to US$100 more if you wait until the end of the year. And if shortages persist, chip prices could go much higher. A PC or laptop could cost an additional US$200. A smartphone might cost even more. Just as power shortages forced by the surge in data centre buildout are pushing up utility costs, every electronic gadget you own, from your phone to PC to game console and even your next car, is likely to cost more as memory chip shortages become more severe.
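A rough way to sanity-check these figures is to hold the non-memory cost of the laptop fixed and ask what sticker price would make memory 15% of the total instead of 10%. The sketch below does that arithmetic. The fixed non-memory cost and full pass-through to the buyer are simplifying assumptions, and the function name is purely illustrative.

```python
def price_at_memory_share(current_price, current_share, new_share):
    """Price at which memory makes up `new_share` of the total.

    Assumes the non-memory cost stays fixed and the whole increase
    is passed on to the buyer (both are simplifying assumptions).
    """
    non_memory_cost = current_price * (1 - current_share)
    return non_memory_cost / (1 - new_share)


today = 1200.0  # US$ laptop price quoted in the article
new_price = price_at_memory_share(today, current_share=0.10, new_share=0.15)
increase = new_price - today

print(f"Price if memory reaches 15% of cost: US${new_price:,.2f}")
print(f"Increase over today: US${increase:,.2f}")
```

Under these assumptions the increase comes to roughly US$70, which sits inside the "up to US$100" range quoted above.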

UBS now forecasts DRAM prices per gigabyte could increase 289% between 2025 and 2027, surpassing the 89% rise in the previous 2017–2018 cycle, while NAND flash memory is currently up 144%, surpassing the 92% rebound in 2024. “Stronger AI and server demand plus rising prices are lifting forecasted DRAM and NAND flash revenues in 2026 to US$566 billion and in 2027 to US$788 billion, staggeringly above the US$153 billion peak in 2018,” the Swiss bank noted in a recent report. “The sharp increase is contributing to the strong upward rise in hyperscale capex chasing strong AI demand but driving headwinds to PC and smartphone units and brands to pass through or absorb costs,” the report noted. UBS estimates that higher memory prices could add up to US$100 billion to hyperscale capex in 2026 and 2027.
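Percentage increases of this size are easier to judge as multiples. The short sketch below converts the UBS figures quoted above; it only restates the article's numbers, and the helper name is illustrative.

```python
def multiple_from_pct_increase(pct_increase):
    """Convert a percentage increase into a price multiple (100% -> 2x)."""
    return 1 + pct_increase / 100


dram_multiple = multiple_from_pct_increase(289)       # forecast rise, 2025 to 2027
prev_cycle_multiple = multiple_from_pct_increase(89)  # 2017-2018 cycle

peak_2018 = 153  # US$ billion, prior revenue peak
rev_2026_vs_peak = 566 / peak_2018
rev_2027_vs_peak = 788 / peak_2018

print(f"DRAM price per GB: {dram_multiple:.2f}x (previous cycle: {prev_cycle_multiple:.2f}x)")
print(f"2026 revenue vs 2018 peak: {rev_2026_vs_peak:.1f}x")
print(f"2027 revenue vs 2018 peak: {rev_2027_vs_peak:.1f}x")
```

In other words, a 289% increase means prices end up at nearly four times their starting level, and the 2027 revenue forecast is about five times the 2018 peak.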

Little wonder, then, that the squeeze is being called “RAMmageddon” by users and “memory supercycle” by chipmakers. DRAM prices jumped 75% between December 2025 and the end of January 2026, driven largely by AI data centres, which consumed almost 70% of all memory chips manufactured in 2025. With so much of the production going to AI data centres, there just aren’t enough memory chips left for PCs, laptops, smartphones, gaming consoles, cars or industrial equipment. DRAM maker Micron recently reported that high-bandwidth memory (HBM) and cloud-related memory rose from 17% of its DRAM revenue in 2023 to nearly 50% in 2025. As chipmakers reallocated production capacity toward the more lucrative HBM for AI graphics processing units (GPUs), the supply of consumer and enterprise DRAM began to dry up. HBM4, which data centres are using, costs about seven times as much as the DRAM in your PC, smartphone or EV. Can you blame Hynix or Samsung for prioritising data centres over phones or cars? iPhone maker Apple’s CEO Tim Cook and electric vehicle pioneer Tesla’s CEO Elon Musk recently sounded the alarm as the crisis rippled across everything from automobiles to smartphones.

For his part, Musk warned that memory chips and other components will likely fail to keep up with demand in the coming years. “We’ve got two choices: Hit the chip wall or make a Fab,” he said last month. The world’s richest man has long wondered whether he should build a huge TeraFab in the US to produce both memory chips and GPUs to meet soaring demand. He believes the US might need a wafer fab with a capacity of up to one million wafers a month. Such a plant could eventually cost over US$200 billion — probably the most expensive facility ever built.

With hyperscalers such as Amazon.com, Microsoft, Google, Meta Platforms and Oracle expected to spend over US$700 billion on capex this year, most of it on AI infrastructure, demand for everything that helps make AI chips work goes up. A key component is HBM, a stacked, high-speed type of DRAM designed specifically for AI processors like Nvidia’s GPUs to move massive amounts of data extremely quickly.

The “Big Three” memory chip makers (Samsung, Hynix and Micron Technology) collectively control 93% of the global DRAM market, with three small Taiwanese players (Nanya Technology, Winbond Electronics and Powerchip Semiconductor Manufacturing Corp, or PSMC) sharing the rest. That extreme concentration is why supply disruptions or demand spikes in one area, such as AI, can ripple across the entire memory market very rapidly. For AI data centres, HBM is the way around the “memory bandwidth wall”. Transformers and other neural networks require moving large amounts of data, such as model weights and activations, between memory and compute. Diffusion models like Stable Diffusion that can create photo-realistic images from text descriptions, and Sora, OpenAI’s text-to-video application, also benefit from HBM on high-end GPUs. HBM is considered a strategic material. GPU maker Nvidia now allocates HBM supply tightly and CEO Jensen Huang has called memory chips a key constraint on AI chip production.

Samsung leads in memory chips overall with a 34% global market share, followed closely by Hynix with over 33% and Micron a distant third with 26%. In HBM, however, Hynix has carved its own niche and become the top supplier to Nvidia. In 2Q2025, Hynix had a 62% share of HBM production while Samsung trailed at 17%. Over the past few months, Samsung has cut production of other kinds of memory chips and boosted production of higher-end HBM chips. Hynix’s share of HBM chips has fallen to around 50% while Samsung’s has risen to 39%.

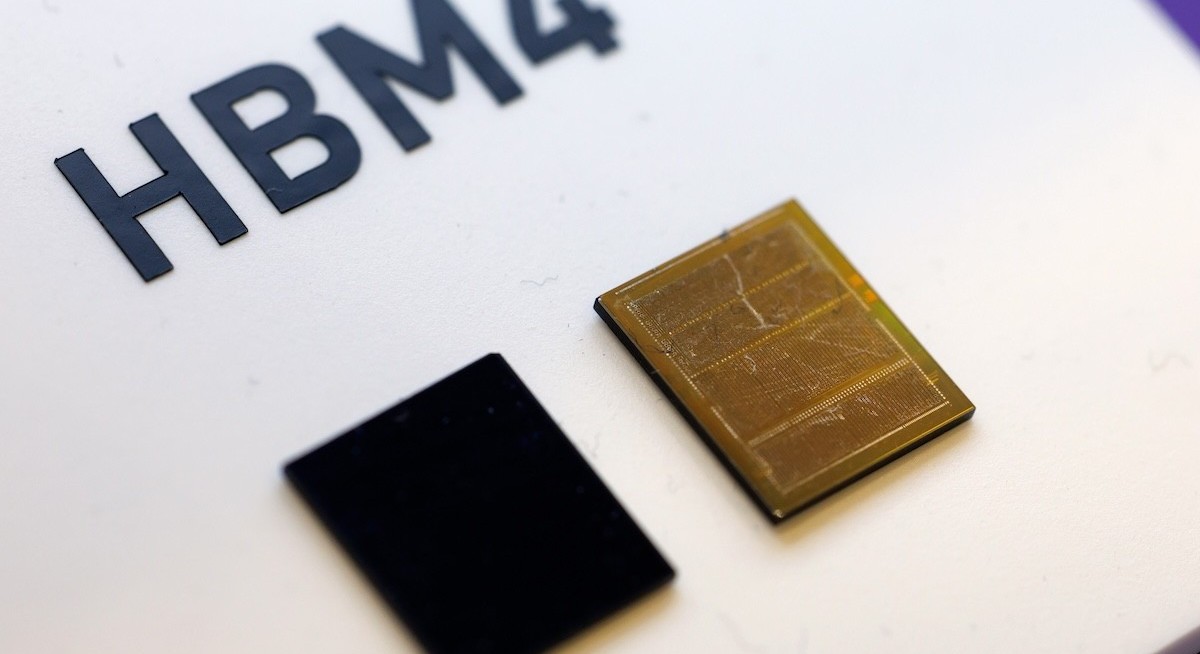

The newest high-bandwidth chip is HBM4, which will power the next generation of AI and HPC (high-performance computing) hardware, offering roughly double the bandwidth and capacity of HBM3E chips. In January, Hynix showcased a 16-high HBM4 stack (48GB) with a per-pin speed of about 11.7 Gbps (translating into roughly 3TB/s of bandwidth per stack) to target next-gen AI accelerators and GPUs. AI training and inference workloads running on accelerators such as Nvidia’s Vera Rubin, AMD’s Instinct MI500 Series GPU, and the custom AI chips from Google, Amazon and Microsoft that Broadcom is helping them build, are increasingly limited by memory bandwidth. HBM4 has been designed to keep up with the growing compute demands of large AI models.
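The jump from a speed of about 11.7 to roughly 3TB/s is worth spelling out. The sketch below reads the quoted speed as a per-pin data rate in gigabits per second and multiplies it by the width of the memory interface. The 2,048-bit interface width is an assumption taken from the published HBM4 standard, not a figure from the Hynix announcement, and the names are illustrative.

```python
PIN_SPEED_GBPS = 11.7        # per-pin data rate in gigabits per second (quoted above)
INTERFACE_WIDTH_BITS = 2048  # assumption: HBM4 interface width per stack
BITS_PER_BYTE = 8


def stack_bandwidth_gb_per_s(pin_speed_gbps, width_bits):
    """Peak bandwidth of one stack in gigabytes per second."""
    return pin_speed_gbps * width_bits / BITS_PER_BYTE


bandwidth_gb = stack_bandwidth_gb_per_s(PIN_SPEED_GBPS, INTERFACE_WIDTH_BITS)
bandwidth_tb = bandwidth_gb / 1000  # decimal terabytes

print(f"Peak bandwidth per stack: {bandwidth_gb:,.1f} GB/s (about {bandwidth_tb:.1f} TB/s)")
```

This is a theoretical peak for a single stack. An accelerator that carries several stacks multiplies the figure accordingly, and sustained throughput in practice will be lower.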

The NAND flash memory market is slightly less concentrated than the DRAM market. Samsung has a 40% share of the market and Hynix has a 27% share. Three other players, Japan’s Kioxia (a name that blends kioku, the Japanese word for “memory”, with axia, the Greek word for “value”), Micron, and SanDisk, each have between 10% and 12%. Flash memory is used across the AI ecosystem. Edge AI devices such as smartphones, cameras and sensors store models locally on NAND flash so inference can run without a network connection. Flash memory is also used in AI accelerator cards for firmware, boot code, and sometimes model weights that need to persist across power cycles, as well as in embedded AI chips such as those in smart speakers or wearables, like smart glasses or AI-powered earbuds, which rely on it to store compact neural network models.

Massive imbalance

Goldman Sachs expects DRAM supply tightness and elevated pricing to continue for at least two more years. “The IT hardware industry is entering a structural scarcity phase as AI infrastructure demand triggers a massive supply-demand imbalance in the memory sector,” Goldman analysts noted in a recent report. “DRAM and NAND are experiencing multi-month lead times reminiscent of the Covid-19 era, with HBM particularly constrained due to packaging complexity and yield learning curves,” they said. This scarcity is being exacerbated by supply discipline among major players — Hynix, Samsung and Micron — which has an outsized impact on low-end consumer electronics and non-AI segments that are struggling for allocation, the report noted.

Beyond the constraints in DRAMs, the AI ecosystem also faces backlogs that could last months or even years across several critical data centre physical infrastructure components, including turbines, transformers, advanced packaging, power supplies, liquid-cooling components, fans, optical switches, fibre and chassis. If those backlogs delay the massive buildout of data centres, memory chip supplies would have more time to catch up with demand.

The surge in memory chip prices is boosting the bottom lines of two Korean chip giants. Samsung (which in Korean literally means “three stars”) is now the 12th-largest listed firm in the world, just ahead of retail Goliath Walmart, with a market capitalisation of over US$1 trillion. Bank of America expects Hynix’s profits, which were up 116% last year, to soar another 93% this year to US$57.5 billion. Hynix is now the world’s 21st-largest listed entity, with a market value of US$505 billion. Over the next few years, the two Korean chip giants will become even bigger global players.

Assif Shameen is a technology and business writer based in North America