At a sufficient scale, building AI on top of third-party silicon becomes a structural problem. Compute is expensive, supply is constrained and others set the pricing. Every hyperscaler running large AI workloads faces some version of this constraint.

Microsoft has spent nearly a decade engineering around it. A custom CPU, a custom inference chip, AI-dedicated data centres, and a quantum computer under construction are the results of that effort, with several now moving toward commercial deployment. Taken together, the moves suggest Microsoft is steadily assembling more of the infrastructure behind its computing platform itself, from the processor to the facility.

Different chips for different workloads

Jessica Hawk, Microsoft’s corporate vice president for Azure product marketing, puts the commercial problem plainly. AI workloads are not uniform. Training a frontier model, running inference on a customer query and processing large-scale analytics require different kinds of compute. Routing all of them through the same premium GPU can quickly become an expensive default.

Microsoft, therefore, thinks about its infrastructure as what Hawk calls a “token factory”, a system designed to match each workload to the most cost-efficient compute available, rather than defaulting to the most expensive option. Customers, she argues, will ultimately judge AI platforms on that. “Every customer is going to be looking for price performance, and not every workload is the same,” says Hawk.

That approach has led Microsoft to expand both its model catalogue and its chip options. “We have more AI models in our catalogue than any other hyperscaler, and we also have [a wide] chip diversity,” she says, adding that the company continues to work closely with chip providers, including Nvidia and AMD, while developing its own silicon.

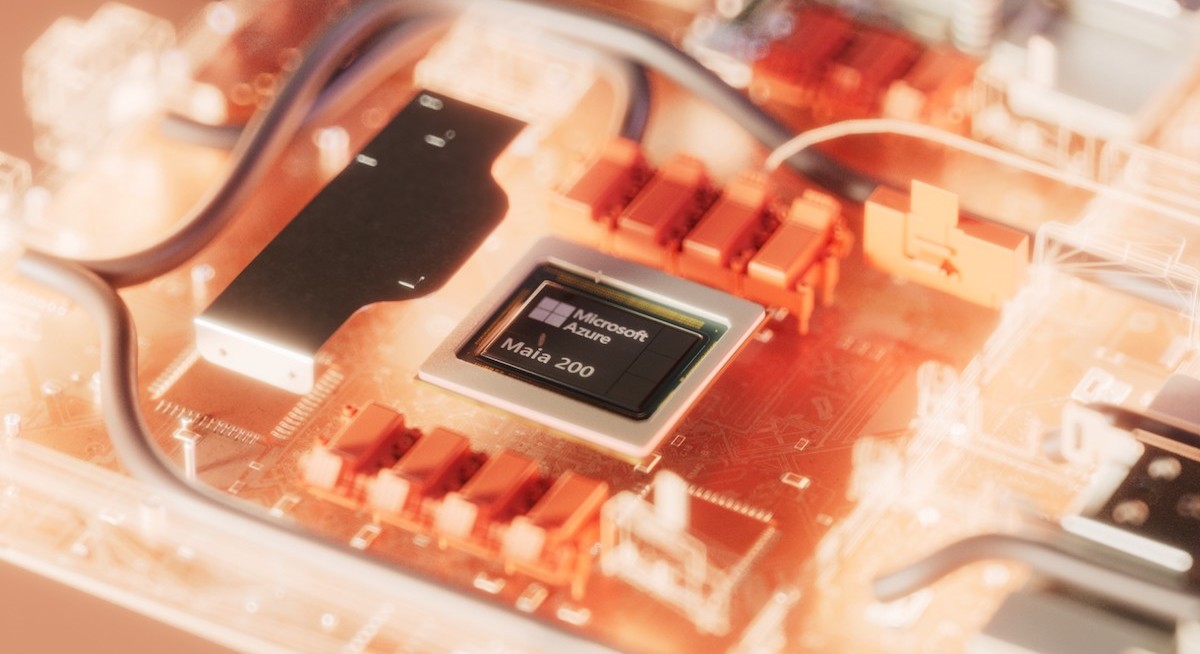

In practice, that means using different processors for different jobs. High-end GPUs from third-party vendors remain the workhorse for training frontier AI models. Azure Cobalt 200, Microsoft’s ARM-based CPU, is designed for cloud-native workloads. Maia 200, Microsoft’s custom AI accelerator, focuses on inference, the high-volume task of running trained models.
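The matching idea can be illustrated with a minimal sketch, which is not Microsoft's actual scheduler. The workload assignments follow the description above; the `relative_cost` figures, the `Processor` class and the `route` function are hypothetical, and the sketch assumes a third-party GPU can also serve inference at a higher cost.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Processor:
    name: str
    workloads: frozenset   # workload types this chip is assumed to handle
    relative_cost: float   # HYPOTHETICAL cost per unit of work, not a real price


# Assignments follow the article; the cost numbers are invented for illustration.
PROCESSORS = [
    Processor("third-party GPU", frozenset({"training", "inference"}), 10.0),
    Processor("Maia 200", frozenset({"inference"}), 4.0),
    Processor("Azure Cobalt 200", frozenset({"cloud-native"}), 1.0),
]


def route(workload: str) -> Processor:
    """Return the cheapest processor able to run the given workload type."""
    candidates = [p for p in PROCESSORS if workload in p.workloads]
    if not candidates:
        raise ValueError(f"no processor registered for workload {workload!r}")
    return min(candidates, key=lambda p: p.relative_cost)


if __name__ == "__main__":
    for job in ("training", "inference", "cloud-native"):
        print(job, "->", route(job).name)
```

Under these assumed costs, an inference job lands on the dedicated accelerator rather than the premium GPU, which is the "expensive default" the approach is meant to avoid.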

The first Maia 200 deployments went live in Microsoft’s Iowa data centre earlier this year, handling Copilot workloads and an expanding set of partner applications. By handling more AI computing on its own silicon, Microsoft gains greater control over the cost of its Azure AI services.

Quantum ambition

Alongside CPUs, GPUs and AI accelerators, Microsoft sees the quantum processing unit (QPU) as a fourth category of compute. “QPUs do not replace CPUs and GPUs — they augment the other two and provide another form of compute. In the future, compute is hybrid,” says Jason Zander, executive vice-president of Microsoft Discovery and Quantum.

Microsoft is not alone in chasing quantum computing. IBM, Google, IonQ and Quantinuum are among dozens of tech companies building quantum processors, each through a different hardware approach. Microsoft’s bet is on topological qubits, which differ from most competitors’ designs in a fundamental way.

Most quantum computers today store information in particles that are highly sensitive to their surroundings, which makes errors hard to avoid. Microsoft’s topological qubit approach, by contrast, aims to encode information in a way that is inherently more stable and easier to protect from noise. The company argues that this makes the technology more practical to scale, even though it remains in its early stages.

In February 2025, Microsoft unveiled Majorana 1, its first quantum chip. The underlying material is a topoconductor, a new class of material engineered from indium arsenide and aluminium, built atom by atom and cooled to near absolute zero. Microsoft says it is the basis for making topological qubits manufacturable rather than merely theoretical. While the chip currently holds eight qubits, the roadmap targets one million on a single chip small enough to fit in the palm of a hand.

Why one million qubits? At that scale, quantum machines could solve certain types of problems in chemistry, materials science and other fields that today’s classical computers cannot solve accurately. For instance, they could help explain why materials crack or corrode, identify catalysts to break down microplastics or capture carbon, and simulate enzyme behaviour well enough to improve drug developers’ ability to predict how compounds will metabolise in the body.

Microsoft’s Majorana 1 quantum chip is built on a new material designed to make topological qubits manufacturable. It currently holds eight qubits, with a target of one million on a palm-sized device. Photo: Microsoft

Yet, companies do not need to wait for that threshold to start seeing value. Unilever has been using Microsoft’s Azure Quantum Elements platform — which combines AI and classical high-performance computing with early quantum simulation — to cut R&D timelines from years to months. The platform is not yet quantum in the full sense, but it is the commercial infrastructure into which deeper quantum capabilities could be added as the hardware matures.

The hardware is already maturing faster than most expected. Magne is a full-scale quantum computer under construction in Copenhagen for QuNorth, a Nordic initiative backed by the Novo Nordisk Foundation and Denmark’s state investment fund. It is intended to give Scandinavian researchers and life sciences companies early access to fault-tolerant quantum computing.

Microsoft is building it with Atom Computing, an American start-up that stores quantum information in individual atoms held in place by laser grids. Although the hardware differs from Microsoft’s own topological approach, it uses the same Microsoft software and error-correction layer on top. In Zander’s framing, the qubit type matters less than the platform on which it runs.

In November 2024, Microsoft and Atom said they had demonstrated 24 reliable, error-corrected logical qubits working together, a proof of concept for what Magne now intends to scale up. The system is expected to house around 50 logical qubits and to become operational in late 2026 or early 2027.

Magne’s timeline also puts a more concrete date on a cybersecurity risk that many enterprises still treat as distant. Most internet encryption today relies on mathematical problems that classical computers cannot solve within a reasonable timeframe. Quantum computers at sufficient scale, however, could solve those problems in hours.

Research from Microsoft and others has already revised down the qubit count needed to break widely used RSA encryption from roughly 10 million to around 600,000, which is within the range of machines now on roadmaps. Researchers call the moment at which that becomes possible Q-Day. “I would put Q-Day between five and 10 years. You should not assume you’re safe. Fix your websites. Re-encrypt. Update your critical infrastructure now,” warns Zander.

Sovereignty questions

As governments across Asia place greater weight on data control and regulatory jurisdiction, sovereignty is becoming central to decisions about where sensitive AI and quantum workloads should run. Zander’s answer is that quantum will follow the same model as cloud computing. “When we have these new [quantum] computers, Microsoft will put them into our data centres along with the rest of our compute,” he says, adding that the company would deploy them in-country and under local regulations where required.

In effect, Microsoft wants customers to access all these computing tools through the same Azure platform, whether the workload runs on its own chips, third-party GPUs or, eventually, quantum hardware. This is not a collection of side bets. It is a long-running effort to control more of the infrastructure behind AI and advanced computing, while keeping it deployable under local rules. With new systems already being built, that future is starting to look close enough for customers to plan around.